Everdrone demonstrates the world’s most advanced visual navigation system for UAVs – powered by Intel® RealSense™ technology

Press release 13.09.2018

– A key issue with today’s UAVs is the lack of intelligent sensing systems. No one would suggest sending out self-driving cars on public roads without extremely sophisticated onboard sensing systems. The same safety logic should apply to autonomous UAVs, says Mats Sällström, CEO at Everdrone.

The system integrates with any UAV platform through its flight controller, providing features such as 360° obstacle avoidance, high-accuracy optical flow navigation, and precision take-off and landing. The onboard hardware processes over 5 GB of sensor data per minute, drastically narrowing the performance gap between navigation systems for autonomous drones and those for self-driving cars. A video demonstration of the system was published on Everdrone’s website on 13 September 2018.

At present, virtually all UAV operations in open airspace are restricted to within the pilot’s line of sight, owing to the lack of regulatory frameworks and clear technical safety standards.

The Swedish company Everdrone is dedicated to enabling safe beyond visual line of sight (BVLOS) operations for autonomous UAVs by developing onboard software and visual navigation systems.

Future regulations are expected to require UAV platforms to carry onboard collision avoidance systems that provide a layer of active safety with no external dependencies. Such systems are known as non-cooperative collision avoidance systems.

– The ability to sense the surrounding environment in real time and detect nearby people and ground-based obstacles is essential when developing safe autonomous UAVs, especially as we see more and more use cases in urban areas, such as emergency response and healthcare-related delivery services, says Mats Sällström, CEO at Everdrone.

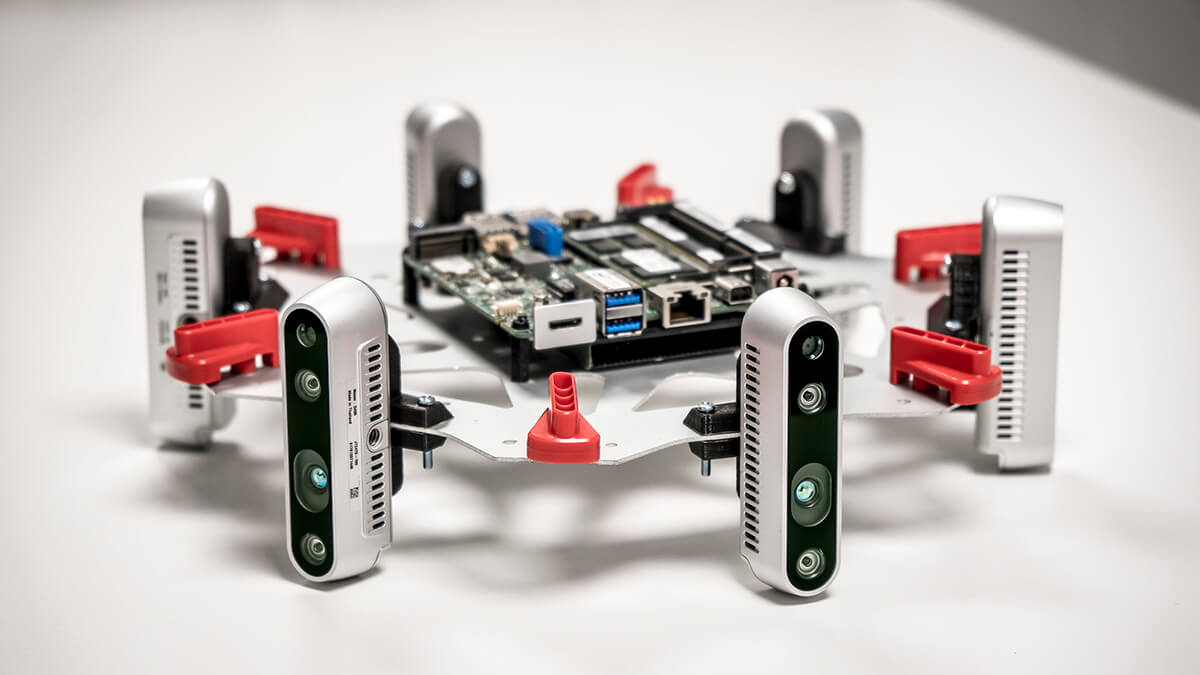

The system developed by Everdrone is enabled by high-resolution depth data collected from seven Intel® RealSense™ cameras mounted on the UAV. The onboard computer processes more than 5 GB of sensor data per minute, creating a detailed 3D model of the UAV’s surroundings in real time. By combining this 3D model with optical flow capabilities and integrating with the UAV’s flight controller, the system lets the UAV navigate safely and precisely around buildings and people. It also enables use cases in GPS-denied environments, such as indoors and in urban canyons.

– The amount of highly detailed, low-latency depth information we have access to is unprecedented. This allows us to create safe, precise and responsive UAV behaviour, and it also enables intelligent, autonomous high-level decision making, says Maciek Drejak, CTO at Everdrone.

Over the coming 12 months, Everdrone intends to run pilot projects and carry out more advanced testing under realistic conditions in urban areas, together with the emergency services of Gothenburg and the healthcare department at Region Västra Götaland.

VISION SYSTEM SPECIFICATIONS

Range: Up to 100 m during daylight conditions (10 m during low-light conditions)

Frame rate: 10-60 Hz

Field of view: 360° × 90° (horizontal sensors), 90° × 60° (downward-facing)

Real-time sensor data collected per minute: 5-7 GB
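To put the quoted data volume in perspective, the short Python sketch below converts a per-minute figure into a sustained rate per second and per camera, for the 5 and 7 GB/min ends of the quoted range. The helper name `data_rates` is hypothetical, and the sketch assumes decimal units (1 GB = 1000 MB) and an even split of data across the seven cameras; neither assumption is stated in the release.

```python
# Hypothetical helper, not part of Everdrone's system.
# Assumptions (not stated in the release): decimal units (1 GB = 1000 MB)
# and data split evenly across the seven cameras.
NUM_CAMERAS = 7


def data_rates(gb_per_minute: float, cameras: int = NUM_CAMERAS) -> tuple[float, float]:
    """Return (total MB/s, MB/s per camera) for a given GB-per-minute figure."""
    mb_per_second = gb_per_minute * 1000 / 60  # GB/min -> MB/s
    return mb_per_second, mb_per_second / cameras


if __name__ == "__main__":
    # Evaluate both ends of the quoted 5-7 GB/min range.
    for gb in (5, 7):
        total, per_cam = data_rates(gb)
        print(f"{gb} GB/min ≈ {total:.1f} MB/s total, ≈ {per_cam:.1f} MB/s per camera")
```

Under these assumptions, the range works out to roughly 83 to 117 MB/s in total, or about 12 to 17 MB/s per camera.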